

BenchmarkDotNet helps you to transform methods into benchmarks, track their performance, and share reproducible measurement experiments. It's no harder than writing unit tests! Under the hood, it performs a lot of magic that guarantees reliable and precise results thanks to the Perfolizer statistical engine. BenchmarkDotNet protects you from popular benchmarking mistakes and warns you if something is wrong with your benchmark design or obtained measurements. The results are presented in a user-friendly form that highlights all the important facts about your experiment. The library is adopted by 3800+ projects including .NET Runtime and supported by the .NET Foundation.

It's easy to start writing benchmarks; check out an example (a copy-pastable version is here):
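A minimal sketch of such a benchmark, assuming the BenchmarkDotNet NuGet package is referenced. The namespace, class, and method names are illustrative, and the two runtime monikers are assumptions that should be adjusted to the runtimes installed on your machine and to your BenchmarkDotNet version.

```csharp
using System;
using System.Security.Cryptography;
using BenchmarkDotNet.Attributes;
using BenchmarkDotNet.Jobs;
using BenchmarkDotNet.Running;

namespace MyBenchmarks
{
    // One job per runtime; the .NET Framework job is marked as the baseline.
    [SimpleJob(RuntimeMoniker.Net472, baseline: true)]
    [SimpleJob(RuntimeMoniker.NetCoreApp30)]
    public class Md5VsSha256
    {
        private readonly SHA256 sha256 = SHA256.Create();
        private readonly MD5 md5 = MD5.Create();
        private byte[] data;

        // Each benchmark is run once for every listed payload size.
        [Params(1000, 10000)]
        public int N;

        // Runs once per parameter value, outside of the measured code.
        [GlobalSetup]
        public void Setup()
        {
            data = new byte[N];
            new Random(42).NextBytes(data);
        }

        // Returning the hash keeps the computation from being eliminated.
        [Benchmark]
        public byte[] Sha256() => sha256.ComputeHash(data);

        [Benchmark]
        public byte[] Md5() => md5.ComputeHash(data);
    }

    public class Program
    {
        public static void Main(string[] args)
        {
            BenchmarkRunner.Run<Md5VsSha256>();
        }
    }
}
```

Build the project in Release mode before running it, since benchmarks of non-optimized builds are not reliable.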

BenchmarkDotNet automatically runs the benchmarks on all the runtimes, aggregates the measurements, and prints a summary table with the most important information:

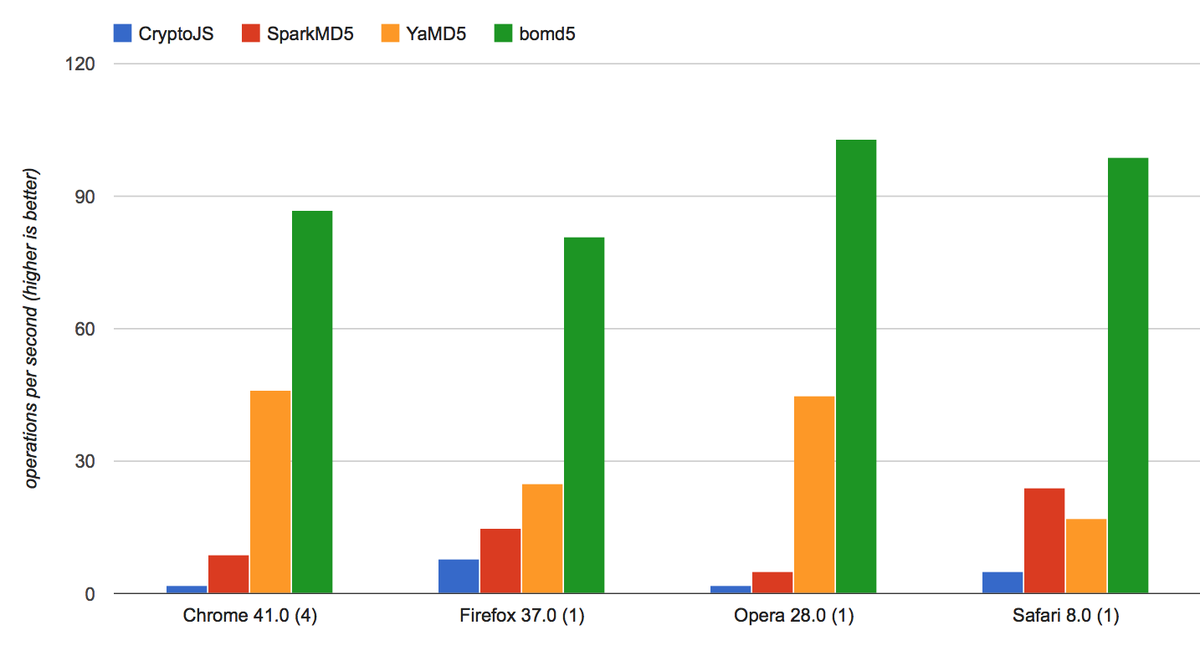

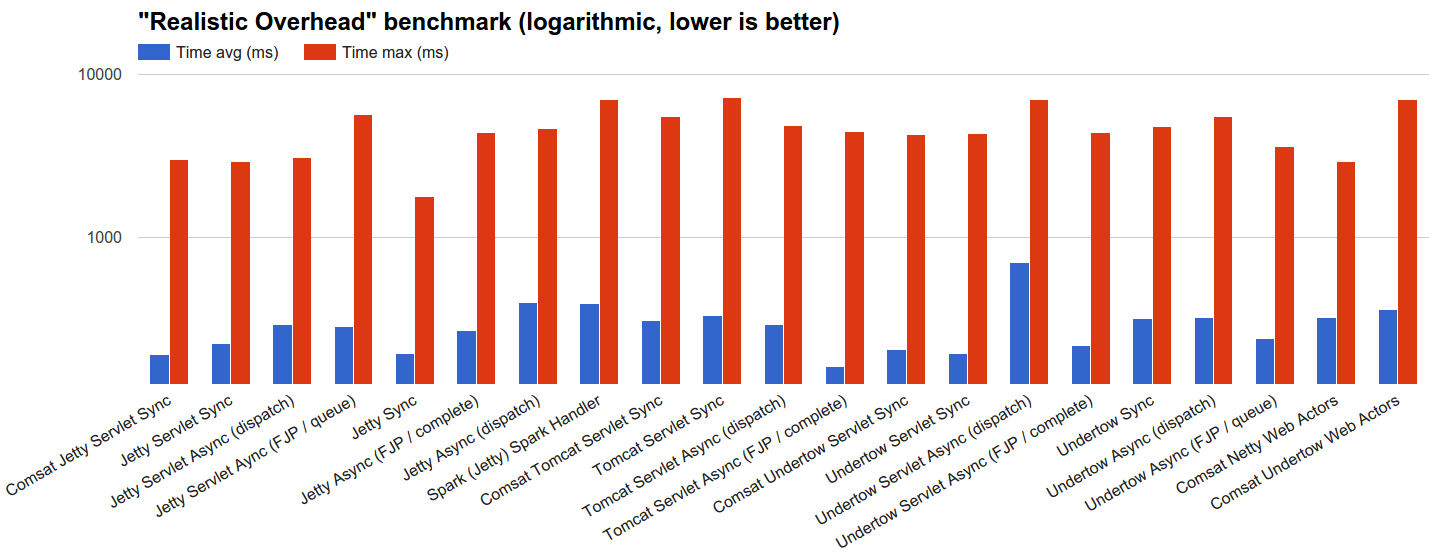

The measured data can be exported to different formats (md, html, csv, xml, json, etc.) including plots:

Supported runtimes: .NET 5+, .NET Framework 4.6.1+, .NET Core 2.0+, Mono, CoreRT

Supported languages: C#, F#, Visual Basic

Supported OS: Windows, Linux, macOS

Features

BenchmarkDotNet has tons of features that are essential in comprehensive performance investigations. Four aspects define the design of these features: simplicity, automation, reliability, and friendliness.

Simplicity

You don't have to be an experienced performance engineer to write benchmarks. You can design very complicated performance experiments in the declarative style using simple APIs.

For example, if you want to parameterize your benchmark, mark a field or a property with [Params(1, 2, 3)]: BenchmarkDotNet will enumerate all of the specified values and run benchmarks for each case. If you want to compare benchmarks with each other, mark one of the benchmarks as the baseline via [Benchmark(Baseline = true)]: BenchmarkDotNet will compare it with all of the other benchmarks. If you want to compare performance in different environments, use jobs. For example, you can run all the benchmarks on .NET Core 3.0 and Mono via [SimpleJob(RuntimeMoniker.NetCoreApp30)] and [SimpleJob(RuntimeMoniker.Mono)].
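The three attributes described above can be combined on one class. The following is a sketch under the assumption that both runtimes are installed; the class and method names are made up for illustration.

```csharp
using System.Text;
using BenchmarkDotNet.Attributes;
using BenchmarkDotNet.Jobs;

// Jobs: run every benchmark on .NET Core 3.0 and on Mono.
[SimpleJob(RuntimeMoniker.NetCoreApp30)]
[SimpleJob(RuntimeMoniker.Mono)]
public class StringBuildingBenchmarks
{
    // Parameterization: each benchmark runs once for Count = 1, 2, and 3.
    [Params(1, 2, 3)]
    public int Count { get; set; }

    // Baseline: the other benchmarks are reported relative to this one.
    [Benchmark(Baseline = true)]
    public string Concat()
    {
        var result = string.Empty;
        for (int i = 0; i < Count; i++)
            result += "x";
        return result;
    }

    [Benchmark]
    public string Builder()
    {
        var builder = new StringBuilder();
        for (int i = 0; i < Count; i++)
            builder.Append('x');
        return builder.ToString();
    }
}
```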

If you don't like attributes, you can call most of the APIs via the fluent style and write code like this:
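A sketch of what a fluent configuration might look like, assuming a BenchmarkDotNet version that provides the `AddJob` and `With...` job methods (older releases used differently named methods). The wrapper class `BenchmarkConfigs` and the environment variable are hypothetical.

```csharp
using BenchmarkDotNet.Configs;
using BenchmarkDotNet.Environments;
using BenchmarkDotNet.Jobs;

public static class BenchmarkConfigs
{
    public static IConfig Create() =>
        ManualConfig.CreateEmpty()                    // a configuration for our benchmarks
            .AddJob(Job.Default                       // first job
                .WithRuntime(ClrRuntime.Net472)       // .NET Framework 4.7.2
                .WithPlatform(Platform.X64)           // run as an x64 application
                .WithJit(Jit.LegacyJit)               // LegacyJIT instead of the default RyuJIT
                .WithGcServer(true))                  // use Server GC
            .AddJob(Job.Default                       // second job
                .AsBaseline()                         // marked as the baseline
                .WithEnvironmentVariable("Key", "Value") // placeholder environment variable
                .WithWarmupCount(0));                 // disable the warm-up stage
}
```

Such a config can be passed as the argument of `BenchmarkRunner.Run<T>(config)`. An empty config carries no loggers or exporters, so in practice you would probably add them or start from the default config instead.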

If you prefer a command-line experience, you can configure your benchmarks via the console arguments in any console application or use the .NET Core command-line tool to run benchmarks from any dll:
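For the console-argument route, the application has to hand its arguments to BenchmarkDotNet. A minimal sketch of such an entry point follows; the argument values shown in the comments are examples only and depend on your benchmarks and installed runtimes.

```csharp
using BenchmarkDotNet.Running;

public class Program
{
    // Forwards the console arguments to BenchmarkDotNet, which uses them to
    // select and configure benchmarks found in this assembly.
    // Example invocations (values are illustrative):
    //   --filter *Md5*
    //   --runtimes net472 netcoreapp3.0
    public static void Main(string[] args) =>
        BenchmarkSwitcher.FromAssembly(typeof(Program).Assembly).Run(args);
}
```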

Automation

Reliable benchmarks always include a lot of boilerplate code.

Let's think about what you should do in a typical case. First, you should perform a pilot experiment and determine the best number of method invocations. Next, you should execute several warm-up iterations and ensure that your benchmark has reached a steady state. After that, you should execute the main iterations and calculate some basic statistics. If you calculate some values in your benchmark, you should use them somehow to prevent dead code elimination. If you use loops, you should consider the effect of loop unrolling on your results (which may depend on the processor architecture). Once you get results, you should check for special properties of the obtained performance distribution, like multimodality or extremely high outliers. You should also evaluate the overhead of your infrastructure and subtract it from your results. If you want to test several environments, you should perform the measurements in each of them and manually aggregate the results.
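To make the amount of boilerplate concrete, here is a deliberately simplified hand-written harness covering only the pilot, warm-up, main, overhead, and statistics steps. It is a sketch, not how BenchmarkDotNet is implemented: the 50 ms iteration threshold, the iteration counts, and all names are arbitrary assumptions, and it ignores loop unrolling, outliers, and multimodality.

```csharp
using System;
using System.Diagnostics;
using System.Linq;

public static class ManualBenchmark
{
    // Times `invocations` consecutive calls of the action.
    public static TimeSpan MeasureIteration(Action action, long invocations)
    {
        var stopwatch = Stopwatch.StartNew();
        for (long i = 0; i < invocations; i++)
            action();
        stopwatch.Stop();
        return stopwatch.Elapsed;
    }

    // Pilot stage: double the invocation count until one iteration
    // takes at least `minIterationTime`.
    public static long FindInvocationCount(Action action, TimeSpan minIterationTime)
    {
        long invocations = 1;
        while (MeasureIteration(action, invocations) < minIterationTime)
            invocations *= 2;
        return invocations;
    }

    // Runs several iterations and returns nanoseconds per invocation for each.
    private static double[] Sample(Action action, long invocations, int iterations) =>
        Enumerable.Range(0, iterations)
            .Select(_ => MeasureIteration(action, invocations).TotalMilliseconds * 1e6 / invocations)
            .ToArray();

    // Requires mainIterations >= 2 so that the sample variance is defined.
    public static (double MeanNs, double StdDevNs) Run(
        Action action, int warmupIterations = 5, int mainIterations = 15)
    {
        long invocations = FindInvocationCount(action, TimeSpan.FromMilliseconds(50));

        // Warm-up stage: results are discarded.
        Sample(action, invocations, warmupIterations);

        // Overhead stage: cost of the loop and delegate call with an empty body.
        double overheadNs = Sample(() => { }, invocations, mainIterations).Average();

        // Main stage: subtract the overhead from every measurement.
        double[] samples = Sample(action, invocations, mainIterations)
            .Select(ns => Math.Max(0.0, ns - overheadNs))
            .ToArray();

        double mean = samples.Average();
        double variance = samples.Sum(x => (x - mean) * (x - mean)) / (samples.Length - 1);
        return (mean, Math.Sqrt(variance));
    }
}
```

When calling `ManualBenchmark.Run`, store the computed value somewhere observable, for example by assigning it to a static field inside the lambda, so that the work is not removed as dead code.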

If you write this code from scratch, it's easy to make a mistake and spoil your measurements. Note that this is a shortened version of the full checklist that you should follow during benchmarking: there are a lot of additional hidden pitfalls that should be handled appropriately. Fortunately, you don't have to worry about it because BenchmarkDotNet will do this boring and time-consuming stuff for you.

Moreover, the library can help you with some advanced tasks that you may want to perform during the investigation. For example, BenchmarkDotNet can measure the managed and native memory traffic and print disassembly listings for your benchmarks.
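As a sketch of how these diagnostics might be switched on, the class below enables the managed memory diagnoser and the disassembly diagnoser through attributes. The benchmark bodies are illustrative; native memory profiling needs a separate diagnoser that is not shown here.

```csharp
using System.Linq;
using BenchmarkDotNet.Attributes;

[MemoryDiagnoser]        // adds managed allocation statistics to the summary
[DisassemblyDiagnoser]   // exports disassembly listings of the benchmarked methods
public class AllocationBenchmarks
{
    // Allocates a new array on every invocation.
    [Benchmark]
    public int[] AllocateArray() => new int[1024];

    // Sums 0..1023 through LINQ, which allocates an enumerator.
    [Benchmark]
    public int SumRange() => Enumerable.Range(0, 1024).Sum();
}
```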

Reliability

A lot of hand-written benchmarks produce wrong numbers that lead to incorrect business decisions. BenchmarkDotNet protects you from most of the benchmarking pitfalls and allows achieving high measurement precision.

You don't have to worry about the perfect number of method invocations or the number of warm-up and actual iterations: BenchmarkDotNet tries to choose the best benchmarking parameters and achieve a good trade-off between the measurement precision and the total duration of all benchmark runs. So, you shouldn't use any magic numbers (like 'We should perform 100 iterations here'); the library will choose them for you based on the values of statistical metrics.

BenchmarkDotNet also prevents benchmarking of non-optimized assemblies that were built in DEBUG mode because the corresponding results will be unreliable. It will warn you if you have an attached debugger, if you use a hypervisor (Hyper-V, VMware, VirtualBox), or if you have any other problems with the current environment.

During 6+ years of development, we faced dozens of different problems that may spoil your measurements. Inside BenchmarkDotNet, there are a lot of heuristics, checks, hacks, and tricks that help you to increase the reliability of the results.

Friendliness

Analysis of performance data is a time-consuming activity that requires attentiveness, knowledge, and experience. BenchmarkDotNet performs the main part of this analysis for you and presents results in a user-friendly form.

After the experiments, you get a summary table that contains a lot of useful data about the executed benchmarks. By default, it includes only the most important columns, but they can be easily customized. The column set is adaptive and depends on the benchmark definition and measured values. For example, if you mark one of the benchmarks as a baseline, you will get additional columns that help you compare all the benchmarks with the baseline. By default, the table always shows the Mean column, but if a vast difference between the Mean and the Median values is detected, both columns will be presented.

BenchmarkDotNet tries to find unusual properties of your performance distributions and prints nice messages about them. For example, it will warn you in case of a multimodal distribution or high outliers. In this case, you can scroll the results up and check out ASCII-style histograms for each distribution or generate beautiful png plots using [RPlotExporter].

BenchmarkDotNet doesn't overload you with data; it shows only the essential information depending on your results: it allows you to keep the summary small for primitive cases and extend it only for the complicated cases. Of course, you can request any additional statistics and visualizations manually. If you don't customize the summary view, the default presentation will be as user-friendly as possible. :)
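Requesting extra statistics and plots manually could look like the sketch below, which adds three statistic columns and the plot exporter through attributes. The benchmark class is illustrative, and the plot exporter assumes that R is installed and available on the machine.

```csharp
using System;
using System.Linq;
using BenchmarkDotNet.Attributes;

[MinColumn, MaxColumn, MedianColumn]  // extra statistic columns in the summary table
[RPlotExporter]                       // png plots generated through R
public class SortBenchmarks
{
    private int[] data;

    // Prepares a reversed array once, outside of the measured code.
    [GlobalSetup]
    public void Setup() => data = Enumerable.Range(0, 1000).Reverse().ToArray();

    [Benchmark(Baseline = true)]
    public int[] LinqOrderBy() => data.OrderBy(x => x).ToArray();

    // Sorts a copy so that every invocation starts from the same input.
    [Benchmark]
    public int[] ArraySort()
    {
        var copy = (int[])data.Clone();
        Array.Sort(copy);
        return copy;
    }
}
```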

Who uses BenchmarkDotNet?

Everyone! BenchmarkDotNet is already adopted by 3800+ projects, including dotnet/performance (reference benchmarks for all .NET Runtimes), dotnet/runtime (.NET Core runtime and libraries), Roslyn (C# and Visual Basic compiler), Mono, ASP.NET Core, ML.NET, Entity Framework Core, SignalR, F#, Orleans, Newtonsoft.Json, Elasticsearch.Net, Dapper, Expecto, Accord.NET, ImageSharp, RavenDB, NodaTime, Jint, NServiceBus, Serilog, Autofac, Npgsql, Avalonia, ReactiveUI, SharpZipLib, LiteDB, GraphQL for .NET, MediatR, TensorFlow.NET, and Apache Thrift.

On GitHub, you can find 3000+ issues, 1800+ commits, and 500,000+ files that involve BenchmarkDotNet.

Learn more about benchmarking

BenchmarkDotNet is not a silver bullet that magically makes all of your benchmarks correct and analyzes the measurements for you. Even if you use this library, you still should know how to design the benchmark experiments and how to make correct conclusions based on the raw data. If you want to know more about benchmarking methodology and good practices, it's recommended to read a book by Andrey Akinshin (the BenchmarkDotNet project lead): 'Pro .NET Benchmarking'. Use this in-depth guide to correctly design benchmarks, measure key performance metrics of .NET applications, and analyze results. This book presents dozens of case studies to help you understand complicated benchmarking topics. You will avoid common pitfalls, control the accuracy of your measurements, and improve the performance of your software.

Build status

| Build server | Platform | Build status |
|---|---|---|
| Azure Pipelines | Windows | |
| Azure Pipelines | Ubuntu | |
| Azure Pipelines | macOS | |
| AppVeyor | Windows | |
| Travis | Linux | |
| Travis | macOS | |

Contributions are welcome!

BenchmarkDotNet is already a stable full-featured library that allows performing performance investigations on a professional level. And it continues to evolve! We add new features all the time, but we have more cool ideas than we can implement ourselves. Any help will be appreciated. You can develop new features, fix bugs, improve the documentation, or do some other cool stuff.

If you want to contribute, check out the Contributing guide and up-for-grabs issues. If you have new ideas or want to complain about bugs, feel free to create a new issue. Let's build the best tool for benchmarking together!

Code of Conduct

This project has adopted the code of conduct defined by the Contributor Covenant to clarify expected behavior in our community. For more information, see the .NET Foundation Code of Conduct.
